
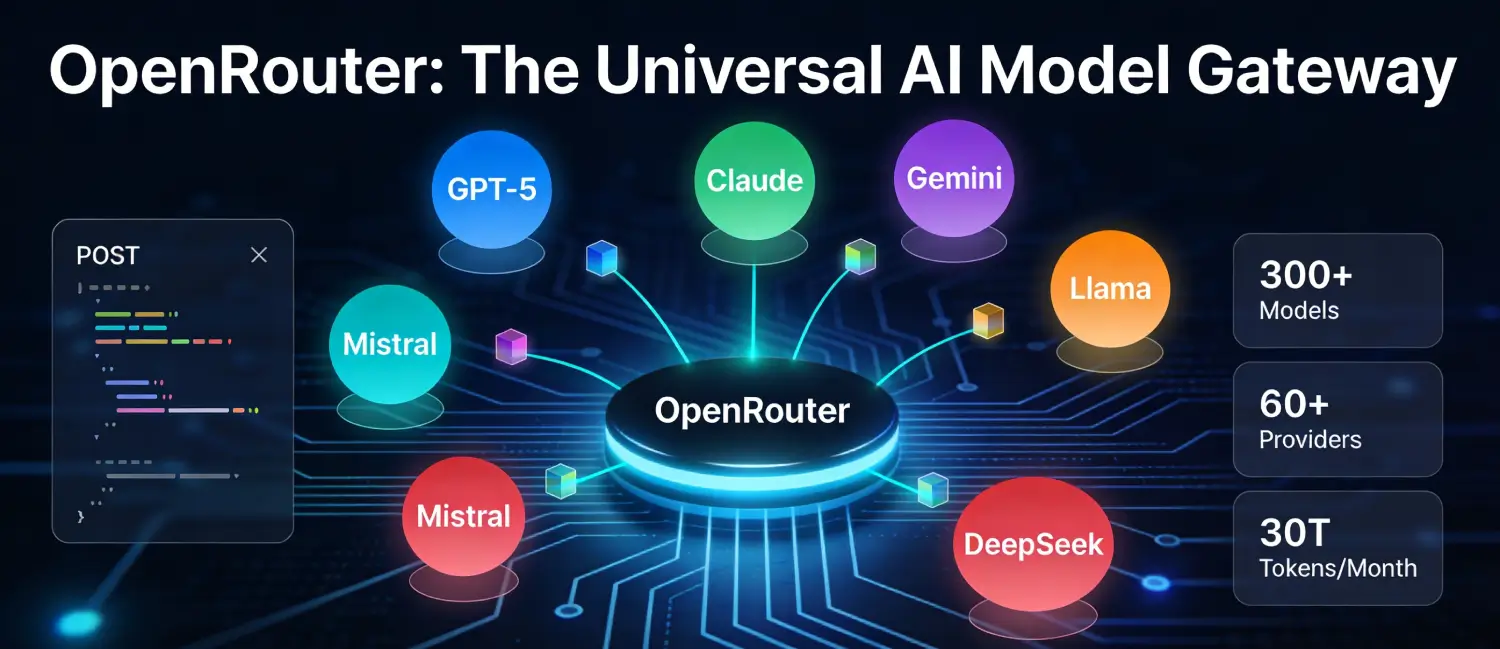

OpenRouter is a unified API platform that provides developers and businesses with access to hundreds of large language models (LLMs) from dozens of AI providers, all through a single, standardized endpoint. Rather than managing separate API keys, billing accounts, and SDK integrations for OpenAI, Anthropic, Google, Meta, Mistral, and others, developers use one API key and one consistent interface to reach the entire model catalog. The platform works as an intelligent router, sending requests to the right provider while handling authentication, billing, error recovery, and performance optimization behind the scenes.

At its core, OpenRouter functions as a meta-layer on top of a fragmented AI industry, providing interoperability as a service. It creates a competitive marketplace where AI models must compete on price and performance rather than on the friction of unique API integrations. As of March 2026, OpenRouter processes over 30 trillion tokens monthly, serves 5 million+ global users, and offers access to 300+ models from 60+ active providers.

OpenRouter was co-founded in February/March 2023 by Alex Atallah, the co-founder and former CTO of OpenSea, the world’s largest NFT marketplace. After leaving his operational role at OpenSea in July 2022, Atallah turned his attention to artificial intelligence. The inspiration for OpenRouter came after he observed the emergence of open-source LLMs like Meta LLaMA and Stanford Alpaca, which demonstrated that smaller teams could create competitive AI models with minimal resources.

Atallah recognized that this would lead to a future with numerous specialized AI models and that developers would need a reliable marketplace to navigate them effectively. One of his collaborators from the browser extension framework Plasmo joined as a co-founder to launch the platform. Alex Atallah now serves as CEO and Co-Founder of OpenRouter, which he describes as “the first and largest LLM aggregator.”

OpenRouter’s growth has been rapid. Annual run-rate inference spend on the platform was $10 million in October 2024 and surged to over $100 million by May 2025, a 10x increase in just seven months. Monthly customer spending grew from $800,000 in October 2024 to approximately $8 million in May 2025. Over one million developers have used its API since launch, with customers ranging from early-stage startups to large multinationals routing mission-critical AI traffic through OpenRouter.

In June 2025, OpenRouter announced the closing of a combined Seed and Series A financing of $40 million. The round was led by Andreessen Horowitz (a16z) and Menlo Ventures, with participation from Sequoia Capital and prominent industry angels. Specifically, the $12.5 million seed round was led by a16z in February 2025, followed by a $28 million Series A from Menlo Ventures in April 2025.

Following the funding, OpenRouter’s valuation reached $500 million. The investment is being used to accelerate product development, expand model coverage, and grow enterprise support. Sacra estimated OpenRouter’s annualized revenue at $5 million in May 2025, up 400% from $1 million at the end of 2024.

“Inference is the fastest-growing cost for forward-looking companies, and it’s often coming from 4 or more different models. The sophisticated companies have run into these problems already and built some sort of in-house gateway. But they’re realizing that making LLMs ‘just work’ isn’t an easy problem.” — Alex Atallah, CEO of OpenRouter.

OpenRouter processes every API request through a three-layer pipeline:

Requests are sent to https://openrouter.ai/api/v1/chat/completions. The request is formatted identically to an OpenAI API call, meaning most developers can integrate simply by changing the endpoint URL.

Step-by-Step Request Flow

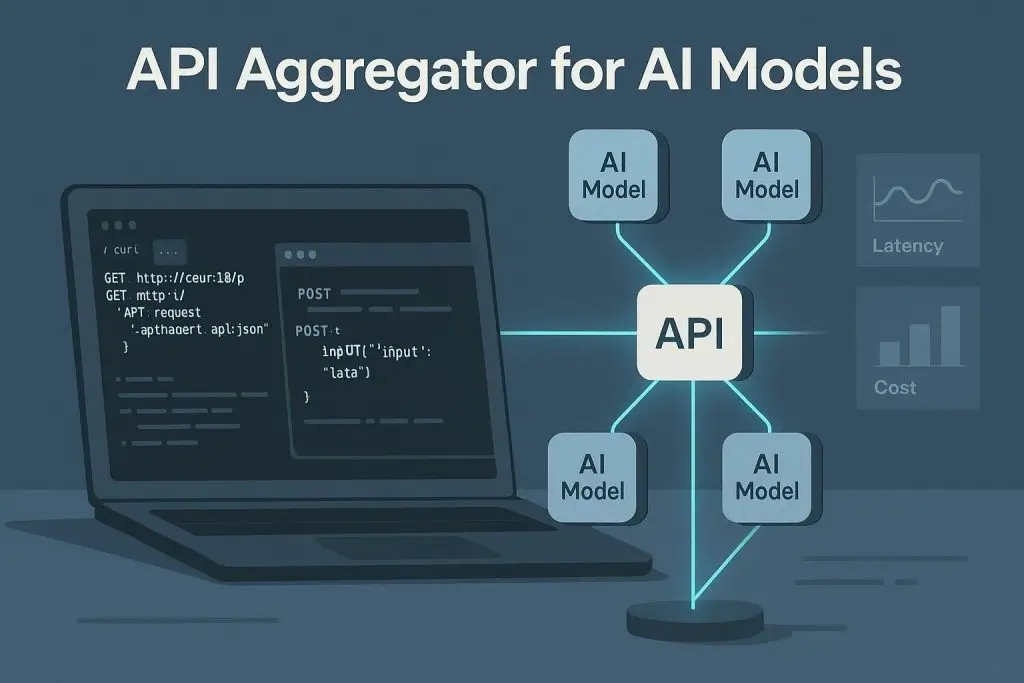

A graphic showing an API aggregator connecting to multiple AI models with latency and cost metrics

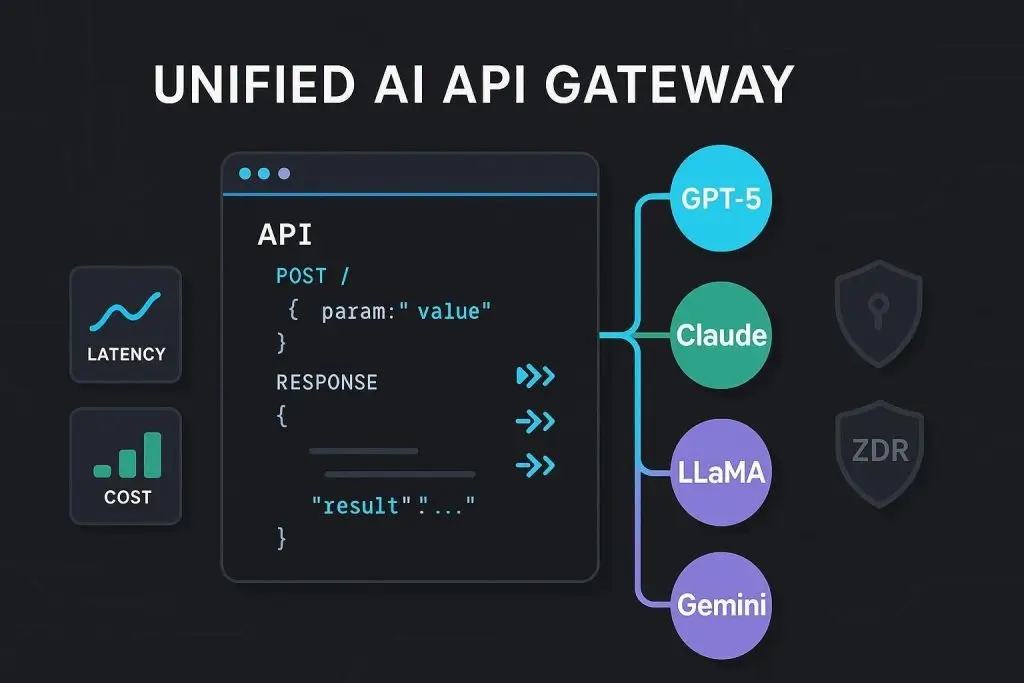

OpenRouter’s API is fully compatible with the OpenAI SDK, meaning developers can switch from OpenAI to OpenRouter simply by changing the endpoint URL and API key. There is no need to rewrite application logic, learn new SDKs, or restructure request formats. This dramatically lowers the integration barrier and makes OpenRouter a nearly frictionless drop-in replacement.

    import os

    import requests

    OPENROUTER_API_URL = "https://openrouter.ai/api/v1/chat/completions"

    # Read the API key from the environment rather than hard-coding it.
    api_key = os.getenv("OPENROUTER_API_KEY")

    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }

    payload = {
        # Auto Router slug: OpenRouter chooses the model for this request.
        "model": "openrouter/auto",
        "messages": [{"role": "user", "content": "Say hello in one sentence."}],
    }

    response = requests.post(OPENROUTER_API_URL, headers=headers, json=payload)

OpenRouter’s routing layer makes smart decisions in real time based on multiple factors:

OpenRouter offers two powerful shortcut suffixes for quick routing control:

- :nitro (for example, meta-llama/llama-3.3-70b-instruct:nitro) sorts providers by throughput (tokens per second), ensuring the fastest available provider is always selected.
- :floor sorts providers by price, so the lowest-cost available provider is selected.

Advanced sorting via the provider.sort parameter is also supported, with options for “price”, “throughput”, and “latency”.
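The two routing controls described above can be sketched as a small payload builder. This is a minimal illustration, not OpenRouter’s own client code: the helper names `with_suffix` and `build_sorted_request` are hypothetical, and the request body shape assumes the OpenAI-style format used elsewhere in this article.

```python
import json
from typing import Optional

# Sort options named in the text for the provider.sort parameter.
SORT_OPTIONS = {"price", "throughput", "latency"}


def with_suffix(model: str, suffix: str) -> str:
    """Append a routing shortcut suffix such as 'nitro' to a model slug."""
    base = model.split(":", 1)[0]  # drop any suffix already present
    return f"{base}:{suffix}"


def build_sorted_request(model: str, prompt: str, sort: Optional[str] = None) -> dict:
    """Build a chat-completions body, optionally setting provider.sort."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    if sort is not None:
        if sort not in SORT_OPTIONS:
            raise ValueError(f"unsupported sort option: {sort!r}")
        payload["provider"] = {"sort": sort}
    return payload


if __name__ == "__main__":
    slug = "meta-llama/llama-3.3-70b-instruct"
    print(json.dumps(build_sorted_request(with_suffix(slug, "nitro"), "Hi"), indent=2))
    print(json.dumps(build_sorted_request(slug, "Hi", sort="price"), indent=2))
```

The suffix form is convenient for one-off calls, while the explicit `provider` object keeps the model slug unchanged and is easier to toggle from configuration.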

LLM APIs go down. OpenRouter addresses this with a Fallback & Reliability Layer that automatically routes to the next available provider when the primary choice fails. This means production applications built on OpenRouter remain operational even during individual provider outages, without any code changes on the developer’s part.
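The idea behind automatic failover can be illustrated with a short local simulation. This is a conceptual sketch only: OpenRouter performs this step server-side, and the names `ProviderError`, `route_with_fallback`, and `fake_send` are hypothetical stand-ins rather than part of any OpenRouter API.

```python
from typing import Callable, Sequence, Tuple


class ProviderError(Exception):
    """Raised when a provider call fails (hypothetical error type)."""


def route_with_fallback(
    providers: Sequence[str], send: Callable[[str], str]
) -> Tuple[str, str]:
    """Try providers in order and return (provider, response) from the first success."""
    errors = []
    for name in providers:
        try:
            return name, send(name)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # remember why this provider was skipped
    raise ProviderError("all providers failed: " + "; ".join(errors))


def fake_send(name: str) -> str:
    """Stand-in for a network call; 'provider-a' is simulated as being down."""
    if name == "provider-a":
        raise ProviderError("503 Service Unavailable")
    return f"hello from {name}"


if __name__ == "__main__":
    print(route_with_fallback(["provider-a", "provider-b"], fake_send))
```

Because the caller only sees the final result, an outage at the first provider is invisible to application code, which is the property the paragraph above describes.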

OpenRouter supports Bring Your Own Key (BYOK), allowing developers to use their own provider API keys (from OpenAI, Anthropic, Google, etc.) while still benefiting from OpenRouter’s unified routing and analytics. BYOK gives users:

The cost for BYOK usage is 5% of what the same model/provider would cost on OpenRouter, and this fee is waived for the first 1 million BYOK requests per month.
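As a worked example of the BYOK pricing rule above, the following sketch computes the fee for a single request. The function name and the idea of tracking a per-month request counter are assumptions made for illustration; only the 5% rate and the 1 million request waiver come from the text.

```python
BYOK_FEE_RATE = 0.05                 # 5% of the equivalent OpenRouter cost (from the text)
FREE_REQUESTS_PER_MONTH = 1_000_000  # fee waived for the first 1M requests (from the text)


def byok_fee(requests_so_far: int, equivalent_cost_usd: float) -> float:
    """Fee for one BYOK request, given how many BYOK requests were already made this month."""
    if requests_so_far < FREE_REQUESTS_PER_MONTH:
        return 0.0  # still inside the monthly waiver
    return BYOK_FEE_RATE * equivalent_cost_usd


if __name__ == "__main__":
    # A request that would have cost $0.02 on OpenRouter's own routing.
    print(byok_fee(10, 0.02))         # inside the waiver
    print(byok_fee(1_000_000, 0.02))  # first request after the waiver is used up
```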

Privacy-conscious developers and enterprises can enable Zero Data Retention (ZDR) mode, which restricts OpenRouter to only route requests to providers that guarantee they will not store your data. OpenRouter negotiates special data agreements with providers on behalf of its users, agreements that previously required enterprise-level contracts with individual providers.

Key privacy controls include:

For models that support it (such as Claude and Gemini), OpenRouter provides access to reasoning tokens, the model’s internal step-by-step thinking process before it produces a final answer. Developers can control reasoning token allocation via the reasoning.max_tokens and reasoning.effort parameters, giving transparency into model decision-making for complex analytical tasks.
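A request that sets these reasoning controls might be built as follows. This is a hedged sketch: the helper name `build_reasoning_request` and the model slug `example/reasoning-model` are placeholders, and the effort value `"high"` is an assumed example rather than a list of accepted values.

```python
from typing import Optional


def build_reasoning_request(
    model: str,
    prompt: str,
    effort: Optional[str] = None,
    max_tokens: Optional[int] = None,
) -> dict:
    """Build a chat-completions body with an optional nested 'reasoning' object."""
    reasoning = {}
    if effort is not None:
        reasoning["effort"] = effort          # maps to reasoning.effort
    if max_tokens is not None:
        if max_tokens <= 0:
            raise ValueError("max_tokens must be positive")
        reasoning["max_tokens"] = max_tokens  # maps to reasoning.max_tokens
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    if reasoning:
        payload["reasoning"] = reasoning
    return payload


if __name__ == "__main__":
    # "example/reasoning-model" is a placeholder slug, not a real model name.
    print(build_reasoning_request("example/reasoning-model", "Plan a migration.", effort="high"))
    print(build_reasoning_request("example/reasoning-model", "Plan a migration.", max_tokens=2000))
```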

OpenRouter supports multimodal inputs wherever the underlying model allows, including text, images, and PDFs. Streaming responses, tool/function calling, assistant prefills, and standard parameters like temperature, top_p, and max_tokens are all supported across the unified interface.

OpenRouter standardizes the tool calling interface across all models and providers. It also supports integration with Model Context Protocol (MCP) servers, enabling models to interact with external systems, databases, and APIs while maintaining a standardized interface.
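To make the standardized tool-calling interface more concrete, here is a minimal sketch of offering one tool to a model and executing the call it returns. It assumes the OpenAI-style `tools` schema and tool-call response shape; `get_weather`, `LOCAL_TOOLS`, and the sample tool call are hypothetical and exist only for illustration.

```python
import json
from typing import Any, Callable, Dict


def get_weather(city: str) -> str:
    """Hypothetical local tool; a real one would query a weather service."""
    return f"Sunny in {city}"


# Tool description in the OpenAI-style "function" schema (assumed format).
WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Return the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}

LOCAL_TOOLS: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def build_tool_request(model: str, prompt: str) -> dict:
    """Request body that offers the weather tool to the model."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "tools": [WEATHER_TOOL],
    }


def run_tool_call(tool_call: dict) -> dict:
    """Execute one tool call and build the follow-up 'tool' message."""
    fn = tool_call["function"]
    args = json.loads(fn["arguments"])  # arguments arrive as a JSON string
    result = LOCAL_TOOLS[fn["name"]](**args)
    return {"role": "tool", "tool_call_id": tool_call["id"], "content": str(result)}


if __name__ == "__main__":
    sample_call = {
        "id": "call_1",
        "type": "function",
        "function": {"name": "get_weather", "arguments": '{"city": "Paris"}'},
    }
    print(run_tool_call(sample_call))
```

Because the schema is the same for every model, the dispatch code in `run_tool_call` does not need to change when the `model` field does.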

Because OpenRouter processes billions of requests monthly, it offers developers valuable real-world performance data, including model popularity rankings, usage statistics per category, cost comparisons, and benchmark data on model pages. The platform’s “State of AI” report (published in 2025) used 100 trillion tokens of usage data to analyze trends in the AI ecosystem.

OpenRouter’s model catalog is one of the largest in the industry, covering 300+ models from 60+ providers. Models span proprietary frontier models, open-source deployments, and specialized fine-tuned variants.

| Provider | Notable Models |
| --- | --- |
| OpenAI | GPT-5.4, GPT-5.2, GPT-4o, o3, gpt-oss-20b (free) |
| Anthropic | Claude Opus 4.6, Claude Sonnet 4.6, Claude Haiku |
| Google | Gemini 3.1 Pro, Gemini 3.1 Flash Lite, Gemma 3 27B |
| Meta | Llama 3.3 70B, Llama 3.1 series |
| Mistral AI | Mistral Small 3.2 24B, Mistral Large |
| DeepSeek | DeepSeek V3.2, DeepSeek V3.1 |
| Moonshot | Kimi K2.5 |

OpenRouter maintains a collection of 25+ free models available with rate limits, no credit card required. These include:

How Billing Works

OpenRouter operates a credit-based billing system with no monthly fees or minimum commitments:

Platform Fees

OpenRouter charges a 5.5% fee (with a $0.80 minimum) when you purchase credits. It passes through the underlying model provider’s pricing without markup on inference costs, meaning you pay the same per-token rate as going directly to the provider.
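The fee rule above is easy to check with a little arithmetic. The sketch below assumes the fee is added on top of the credit amount at purchase time, which the text does not state explicitly; the function names are hypothetical.

```python
FEE_RATE = 0.055     # 5.5% platform fee on credit purchases (from the text)
MIN_FEE_USD = 0.80   # minimum fee per purchase (from the text)


def credit_purchase_fee(amount_usd: float) -> float:
    """Platform fee for buying `amount_usd` of credits, rounded to cents."""
    return round(max(amount_usd * FEE_RATE, MIN_FEE_USD), 2)


def total_charge(amount_usd: float) -> float:
    """Total charged, assuming the fee is added on top of the credit amount."""
    return round(amount_usd + credit_purchase_fee(amount_usd), 2)


if __name__ == "__main__":
    for amount in (10, 100):
        print(amount, credit_purchase_fee(amount), total_charge(amount))
```

The minimum applies whenever 5.5% of the purchase is below $0.80, that is, for purchases under roughly $14.55, so small top-ups carry a proportionally higher fee.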

Plan Tiers

| Feature | Free | Pay-as-you-go | Enterprise |
| --- | --- | --- | --- |
| Platform Fee | None | 5.5% on credit purchase | Bulk discounts |
| Models Available | 25+ free models | 300+ models | 300+ models |
| Providers | 4 free providers | 60+ providers | 60+ providers |
| Rate Limits | 50 req/day | High global limits | Optional dedicated limits |
| BYOK | Not available | 1M free req/month, 5% after | 5M free req/month; custom pricing |
| Data Policy | Standard | ZDR available | Managed policy enforcement |
| Payment | N/A | Credit card, crypto | Invoicing options |
| Support | Community | | Support SLA + Shared Slack |

Crypto Payments

OpenRouter accepts cryptocurrency payments (USDC), with a 5% fee applied to crypto transactions. This makes it accessible to Web3 developers and those who prefer decentralized payment options.

One of the most common questions is: when should you use OpenRouter instead of going directly to a provider?

| Factor | OpenRouter | Direct Provider API |
| --- | --- | --- |
| Integration Complexity | Single API key, unified endpoint | Separate SDK per provider |
| Model Access | 300+ models, all providers | Only that provider’s models |
| Latency | ~50–150ms routing overhead | Lowest possible latency |
| ZDR / Privacy | Negotiated for all providers | Enterprise agreement required |
| Cost | Provider cost + 5.5% credit fee | Direct provider rates |
| Fallback / Uptime | Automatic multi-provider failover | Single provider SLA only |
| Billing | Unified single invoice | Multiple invoices |
| Latest Features | Slight lag for new model integrations | Immediate access |
| Vendor Lock-in | None, switch models freely | Provider-specific |

Best for OpenRouter: Teams running multiple models, startups prototyping fast, applications needing high availability, privacy-conscious users needing ZDR, and developers experimenting with model selection.

Best for Direct API: Latency-critical applications where every millisecond counts, enterprises already locked into a single provider’s ecosystem, and use cases requiring bleeding-edge features at launch.

API Endpoint and Compatibility

OpenRouter exposes a single API endpoint, POST /api/v1/chat/completions, which is fully compatible with the OpenAI SDK. Request formatting follows the OpenAI standard using fields like messages, prompt, max_tokens, and temperature. Additional advanced parameters include transforms, models, tools, and provider.
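A request body that combines the standard fields with the `models` parameter could look like the sketch below. It assumes that `models` is an ordered list of model slugs to try in turn; the helper name `build_fallback_request` and the default values are illustrative choices, not documented defaults.

```python
from typing import List


def build_fallback_request(
    prompt: str,
    models: List[str],
    temperature: float = 0.7,
    max_tokens: int = 256,
) -> dict:
    """Build a chat-completions body that lists several candidate models."""
    if not models:
        raise ValueError("at least one model slug is required")
    return {
        "models": list(models),  # assumed to be tried in the given order
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
        "max_tokens": max_tokens,
    }


if __name__ == "__main__":
    body = build_fallback_request(
        "Summarize this paragraph.",
        ["meta-llama/llama-3.3-70b-instruct", "openrouter/auto"],
    )
    print(body)
```

The resulting dictionary can be passed as the `json` argument of an HTTP POST to the endpoint named above.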

Edge Computing and Low Latency

To minimize routing overhead, OpenRouter uses Cloudflare’s edge computing infrastructure to process and route requests as close to the user as possible. This reduces the latency penalty of the routing layer to typically 50–150ms — imperceptible for most use cases.

Exacto Endpoints for Quality Assurance

With billions of requests flowing through the platform monthly, OpenRouter has accumulated a proprietary dataset about which providers actually deliver better quality for the same model. The “Exacto” endpoints leverage this intelligence to route requests exclusively to curated providers with measurably better tool-use success rates — a feature unique to OpenRouter’s position in the ecosystem.

Model Variant System

OpenRouter’s variant suffix system provides fine-grained routing control:

OAuth PKCE Integration

For consumer applications, OpenRouter supports OAuth PKCE (Proof Key for Code Exchange), allowing developers to let their users bring their own OpenRouter accounts to third-party applications in a secure single sign-on flow.
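The client-side half of a PKCE flow is generating a code verifier and its S256 challenge, as defined in RFC 7636. The sketch below shows only that generic step using the Python standard library; the OpenRouter-specific authorization and token URLs are intentionally left out, and the function names are hypothetical.

```python
import base64
import hashlib
import secrets
from typing import Tuple


def challenge_for(verifier: str) -> str:
    """Derive the S256 code challenge: base64url(SHA-256(verifier)) without padding."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def make_pkce_pair() -> Tuple[str, str]:
    """Return a fresh (code_verifier, code_challenge) pair."""
    verifier = secrets.token_urlsafe(64)  # 86 URL-safe chars, within the 43-128 limit
    return verifier, challenge_for(verifier)


if __name__ == "__main__":
    verifier, challenge = make_pkce_pair()
    print("send in the authorization request:", challenge)
    print("keep secret until the token exchange:", verifier)
```

The application sends the challenge when it redirects the user to sign in, then proves possession of the verifier when exchanging the returned code, so an intercepted code alone is not enough to obtain a key.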

Security and Privacy

OpenRouter has invested significantly in enterprise-grade privacy controls:

OpenRouter negotiated ZDR agreements on behalf of all its users, including with providers like OpenAI, covering privacy protections that previously required enterprise-level contracts and legal review.

Ecosystem and Integrations

OpenRouter has built a broad ecosystem of integrations:

The platform also maintains an active Discord community and GitHub presence for developer support and open-source integrations.

The State of AI: OpenRouter Industry Insights

Because OpenRouter sits at the intersection of hundreds of models and millions of users, it has a unique vantage point on the AI industry. Its 2025 “State of AI” report, based on 100 trillion tokens of usage data, revealed several key trends:

Getting Started with OpenRouter

Quick Setup in 4 Steps

Use https://openrouter.ai/api/v1 as the API base URL.

First API Call (Python)

    import os

    import requests

    headers = {
        "Authorization": f"Bearer {os.getenv('OPENROUTER_API_KEY')}",
        "Content-Type": "application/json",
    }

    payload = {
        "model": "openrouter/auto",
        "messages": [{"role": "user", "content": "Hello, what can you do?"}],
    }

    response = requests.post(
        "https://openrouter.ai/api/v1/chat/completions",
        headers=headers,
        json=payload,
    )
    response.raise_for_status()  # surface HTTP errors before parsing the body

    # "choices" is a list, so index the first choice before reading the message.
    print(response.json()["choices"][0]["message"]["content"])

Competitive Landscape

OpenRouter operates in a growing category of LLM gateway and routing platforms. Key competitors and alternatives include:

| Platform | Approach | Key Differentiator |
| --- | --- | --- |
| OpenRouter | Unified API marketplace, 300+ models | Largest model catalog; Exacto quality routing; ZDR for all |
| Portkey | LLM observability + gateway | Deep observability and guardrails focus |
| Martian Router | ML-based model selection | Neural network routing for quality optimization |
| Unify | Joint cost/quality/speed optimization | Neural net-based routing by Daniel Lenton |
| AWS Bedrock | Cloud-native LLM access | Deep AWS integration, enterprise compliance |
| Azure AI | Microsoft ecosystem integration | Enterprise Microsoft stack alignment |

OpenRouter’s competitive moat lies not just in its API convenience, but in the intelligence layer built from billions of real-world requests: proprietary data on which providers and models actually perform best for which tasks.

OpenRouter has established itself as the default backbone for multi-model AI infrastructure, solving the real-world problem of managing an increasingly fragmented AI provider landscape. From its origins as a simple API gateway in early 2023 to a $500 million-valued platform processing 30 trillion tokens monthly and serving 5 million+ users, OpenRouter has proven that developers want a neutral, high-performance layer between their applications and the growing universe of AI models.

For content creators, developers, and enterprises alike, OpenRouter offers a compelling proposition: access to every major AI model, automatic reliability, transparent pricing, privacy controls, and the freedom to switch or combine models without rebuilding infrastructure. As AI inference becomes the dominant operational cost for modern software companies, OpenRouter’s role as the universal LLM router is only set to grow.

Hassan Tahir wrote this article, drawing on his experience to clarify WordPress concepts and enhance developer understanding. Through his work, he aims to help both beginners and professionals refine their skills and tackle WordPress projects with greater confidence.