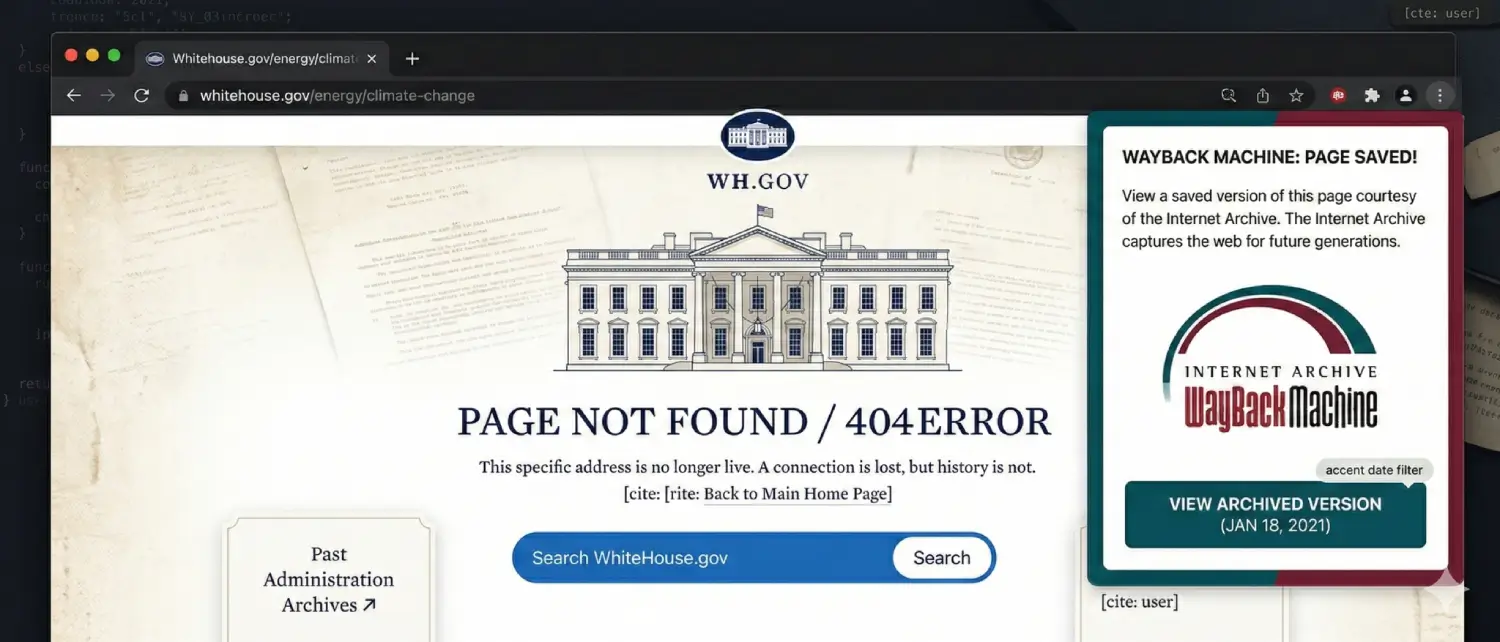

Since the beginning of the World Wide Web, the internet has been fragile. Online information doesn’t last forever; websites get updated, domains expire, and servers shut down. Over time, links stop working, causing a problem known as “link rot.” When this happens, pages disappear, and valuable information is lost.

To prevent this digital loss, the Internet Archive, a San Francisco-based nonprofit, was created by Brewster Kahle and Bruce Gilliat. Its goal is simple but vital: to preserve online content so future generations can access and learn from it.

In 2001, they launched their most famous tool to the public: the Wayback Machine. Named after the fictional time-traveling “WABAC machine” from the 1960s cartoon The Bullwinkle Show, it allows users to travel back in time to see how websites looked in the past.

Over the years, the Internet Archive has grown enormously. It has crossed a remarkable milestone: over one trillion archived web pages, totaling more than 100 petabytes of data. It is now one of the largest digital libraries in human history, serving as a mirror of modern culture and playing a key role in academic research, legal evidence, journalism, and website recovery.

Ingesting petabytes of data requires a globally distributed infrastructure. The Wayback Machine aggregates data from hundreds of daily web “crawls.”

Archivists need to save more than just what a webpage looks like; they need technical background details (like server responses and timestamps) to ensure the archive is accurate and trustworthy.

They do this using the Web ARChive format (WARC), an ISO-standardized file format (ISO 28500). Think of a WARC file as a digital container in which each record is compressed individually: this saves space, yet the system can still pull out a single page without unpacking the whole file.
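Per-record compression is roughly how gzipped WARC files (`.warc.gz`) achieve this: each record is compressed as its own gzip member, and the members are simply concatenated. The Python sketch below uses only the standard library to show why that allows random access. The record contents and the in-memory offset list are stand-ins; in a real archive the offsets would come from an external index, and the function names here are hypothetical.

```python
import gzip
import zlib


def write_records(records: list[bytes]) -> tuple[bytes, list[int]]:
    """Compress each record as its own gzip member and concatenate them.

    Returns the combined file bytes and the byte offset where each member starts.
    """
    chunks, offsets, pos = [], [], 0
    for record in records:
        member = gzip.compress(record)
        offsets.append(pos)
        chunks.append(member)
        pos += len(member)
    return b"".join(chunks), offsets


def read_record(data: bytes, offset: int) -> bytes:
    """Decompress only the gzip member that starts at `offset`."""
    # wbits=16+MAX_WBITS expects a gzip wrapper; the decompressor stops at the
    # end of that single member and leaves later members in `unused_data`.
    d = zlib.decompressobj(16 + zlib.MAX_WBITS)
    return d.decompress(data[offset:])


if __name__ == "__main__":
    records = [b"record one: warcinfo", b"record two: page A", b"record three: page B"]
    data, offsets = write_records(records)
    # Jump straight to the third record without inflating the first two.
    print(read_record(data, offsets[2]))
    # An ordinary gzip reader still sees one continuous stream of all records.
    print(gzip.decompress(data) == b"".join(records))
```

The design trade-off is slightly worse compression (each record restarts the compressor) in exchange for being able to seek directly to any capture.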

A single WARC file contains multiple record types:

| WARC Record Type | Function & Metadata Content |
| --- | --- |
| warcinfo | Usually the first record in the file; describes the WARC file and the records that follow it, such as when and how it was created. |
| request | Preserves the exact request sent by the crawler to the live server (the audit trail). |
| response | Contains the live server’s complete, unaltered response (status codes, HTML, images, etc.). |
| metadata | Holds secondary information generated during the crawl (e.g., subject classifiers, language). |
| revisit | A deduplication tool. If a page hasn’t changed, it points to the previous capture to save space. |
| conversion | Stores an alternative version of another record’s content created by an archival process, such as a migration for modern viewing (e.g., converting old Flash to HTML5). |
| continuation | Holds a later segment of a large record whose content was split across multiple WARC files. |

(Note: The Archive also creates WAT files for technical metadata research and WET files, which contain only plain text and are useful for training AI systems and for language analysis.)
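To make the record types more concrete, here is a minimal Python sketch that walks through uncompressed WARC/1.0 data and reports each record’s `WARC-Type`. It assumes the basic record layout (a `WARC/1.0` version line, `Name: value` header lines, a blank line, a block of `Content-Length` bytes, then two CRLF pairs) and skips everything a production parser such as a dedicated WARC library would handle (digests, case-insensitive header names, gzip). `make_record` is a hypothetical helper used only to build test data.

```python
from typing import Dict, Iterator, Optional, Tuple


def iter_warc_records(data: bytes) -> Iterator[Tuple[Dict[str, str], bytes]]:
    """Yield (headers, block) pairs from uncompressed WARC/1.0 bytes.

    Simplification: header names are matched case-sensitively and no
    digest or field validation is performed.
    """
    pos = 0
    while pos < len(data):
        # The header section ends at the first blank line (CRLF CRLF).
        header_end = data.index(b"\r\n\r\n", pos)
        version, *fields = data[pos:header_end].decode("utf-8").split("\r\n")
        if not version.startswith("WARC/"):
            raise ValueError(f"no WARC version line at offset {pos}")
        headers = {}
        for line in fields:
            name, _, value = line.partition(":")
            headers[name.strip()] = value.strip()
        block_start = header_end + 4
        length = int(headers["Content-Length"])
        yield headers, data[block_start:block_start + length]
        # Every record block is followed by two CRLF pairs.
        pos = block_start + length + 4


def make_record(warc_type: str, block: bytes, target: Optional[str] = None) -> bytes:
    """Build a minimal record for testing (hypothetical helper)."""
    lines = ["WARC/1.0", f"WARC-Type: {warc_type}"]
    if target is not None:
        lines.append(f"WARC-Target-URI: {target}")
    lines.append(f"Content-Length: {len(block)}")
    head = ("\r\n".join(lines) + "\r\n\r\n").encode("utf-8")
    return head + block + b"\r\n\r\n"


if __name__ == "__main__":
    archive = (
        make_record("warcinfo", b"software: demo-crawler")
        + make_record("request", b"GET / HTTP/1.1\r\n\r\n", "http://example.com/")
        + make_record("response", b"HTTP/1.1 200 OK\r\n\r\nhello", "http://example.com/")
    )
    # Keep only the captured server responses, as a replay system would.
    for headers, block in iter_warc_records(archive):
        if headers["WARC-Type"] == "response":
            print(headers["WARC-Target-URI"], len(block), "bytes")
```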

When you search for a URL, you are presented with a calendar interface that maps the history of that web page using color-coded dots.

| Calendar Dot Color | Archival Status Meaning |
| --- | --- |
| Blue | Successful Capture: The crawler got a 200 OK response. This contains actual webpage content. |
| Green | Redirection: The crawler hit a redirect (3xx status). Vital for tracking domain changes. |
| Orange | Client-Side Error: The page was missing or blocked (4xx status, like a 404 Not Found). |
| Red | Server-Side Failure: The target server was down or overloaded during the crawl (5xx error). |
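The color scheme in this table is a direct function of the HTTP status class, which the short Python sketch below expresses. It assumes that any 2xx code is shown in blue like 200 OK; the function names are hypothetical and not part of any Wayback Machine API.

```python
from collections import Counter


def calendar_dot_color(status: int) -> str:
    """Map an HTTP status code to the calendar dot color described above."""
    if 200 <= status < 300:
        return "blue"    # successful capture with real content
    if 300 <= status < 400:
        return "green"   # redirect
    if 400 <= status < 500:
        return "orange"  # client-side error, e.g. 404
    if 500 <= status < 600:
        return "red"     # server-side failure
    raise ValueError(f"not an HTTP status code: {status}")


def summarize_captures(statuses: list[int]) -> Counter:
    """Count how many captures of a page fall into each color."""
    return Counter(calendar_dot_color(s) for s in statuses)


if __name__ == "__main__":
    print(summarize_captures([200, 200, 301, 404, 503]))
```

A page whose history is mostly orange or red dots, for example, has few captures worth restoring.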

Combating Disinformation: Because the archive preserves both truth and falsehood, it now partners with fact-checkers (like PolitiFact). If an archived page contains debunked claims or coordinated disinformation, the Wayback Machine injects a yellow context banner at the top to warn the user, preserving the historical record while mitigating harm.

For developers, the Wayback Machine supports Wayback-specific two-letter modifiers, each followed by an underscore, that are appended to the timestamp in a capture URL to change how a page is delivered:

| Suffix | Proxy Engine Behavior |
| --- | --- |
| id_ | Delivers raw, unadulterated code exactly as captured, bypassing the Wayback navigation toolbar. |
| if_ | Formats delivery specifically for framed/iframed content without recursive toolbars. |
| im_ | Serves the payload explicitly as image data, bypassing text-parsing. |
| cs_ | Forces delivery with a text/css type to ensure modern browsers don’t block stylesheets. |
| js_ | Forces the delivery of raw JavaScript payloads to ensure historical scripts execute properly. |
| oe_ | Removes the overlay for embedded objects/media to prevent conflicts with legacy media players. |
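In practice the modifier is glued directly onto the timestamp, as in `https://web.archive.org/web/<timestamp><modifier>/<original URL>`. The Python sketch below builds such URLs. It assumes the usual `YYYYMMDDhhmmss` timestamp, also accepts a shorter digit prefix such as `2010`, and restricts modifiers to the six listed above; `wayback_url` is a hypothetical helper, not an official client.

```python
import re

# Modifiers listed in the table above.
MODIFIERS = {"id_", "if_", "im_", "cs_", "js_", "oe_"}


def wayback_url(timestamp: str, original_url: str, modifier: str = "") -> str:
    """Build a Wayback Machine capture URL, optionally with a delivery modifier.

    `timestamp` is assumed to be digits in YYYYMMDDhhmmss form; a shorter
    prefix (e.g. "2010") is also accepted here.
    """
    if not re.fullmatch(r"\d{1,14}", timestamp):
        raise ValueError(f"timestamp must be 1 to 14 digits, got {timestamp!r}")
    if modifier and modifier not in MODIFIERS:
        raise ValueError(f"unknown modifier {modifier!r}")
    return f"https://web.archive.org/web/{timestamp}{modifier}/{original_url}"


if __name__ == "__main__":
    # Raw code exactly as captured, without the navigation toolbar.
    print(wayback_url("20050101000000", "http://example.com/", "id_"))
    # A stylesheet requested with the cs_ modifier.
    print(wayback_url("20050101000000", "http://example.com/style.css", "cs_"))
```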

The Wayback Machine is the ultimate disaster recovery tool for businesses and bloggers whose sites vanish due to hacking, expired hosting, or server failures.

| Recovery Methodology | Speed | Tech Skill Required | Output Quality |
| --- | --- | --- | --- |
| Manual Extraction | Very Slow | High (HTML, CSS, Regex) | Prone to human error; requires manual code cleaning. |
| Open-Source CLI | Fast | Moderate (Terminal, Ruby) | Excellent raw data retrieval; recreates directory structures. |
| Commercial SaaS | Instantaneous | Low (Web Interface) | Superior; auto-cleans code, fixes links, and can integrate directly with modern CMS platforms like WordPress. |
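The open-source CLI approach generally boils down to three steps: ask the Archive’s index which URLs were captured, pick one capture per URL, and save each file under a path that mirrors the original site. A minimal Python sketch of that workflow follows, assuming the public CDX server endpoint (`https://web.archive.org/cdx/search/cdx`) and its `output=json` format, in which the first row holds the field names. The helper names, the "newest successful capture" rule, and the `index.html` convention are illustrative choices rather than the behavior of any particular tool, the sample rows are made up, and the actual HTTP downloads are left out.

```python
import json
from pathlib import PurePosixPath
from urllib.parse import urlencode, urlsplit

CDX_ENDPOINT = "https://web.archive.org/cdx/search/cdx"


def cdx_query_url(site: str) -> str:
    """Build a CDX query listing successful captures under `site`."""
    params = {
        "url": site,
        "matchType": "prefix",       # every URL that starts with `site`
        "output": "json",            # list of rows; first row = field names
        "fl": "timestamp,original",  # only the fields we need
        "filter": "statuscode:200",  # skip redirects and errors
    }
    return f"{CDX_ENDPOINT}?{urlencode(params)}"


def latest_captures(cdx_json: str) -> dict:
    """Return {original_url: newest_timestamp} from a CDX JSON response."""
    rows = json.loads(cdx_json)
    if not rows:
        return {}
    header, *entries = rows
    col = {name: i for i, name in enumerate(header)}
    latest = {}
    for row in entries:
        ts, original = row[col["timestamp"]], row[col["original"]]
        # Same-length digit strings compare chronologically as text.
        if ts > latest.get(original, ""):
            latest[original] = ts
    return latest


def raw_capture_url(timestamp: str, original: str) -> str:
    """URL of the raw capture (id_ modifier), without the Wayback toolbar."""
    return f"https://web.archive.org/web/{timestamp}id_/{original}"


def local_path(original: str) -> str:
    """Map an archived URL to a relative file path that mirrors the site."""
    parts = urlsplit(original)
    path = parts.path
    if path == "" or path.endswith("/"):
        path += "index.html"
    return str(PurePosixPath(parts.hostname or "site") / path.lstrip("/"))


if __name__ == "__main__":
    print(cdx_query_url("example.com"))
    # Illustrative response rows, not real archive data.
    sample = json.dumps([
        ["timestamp", "original"],
        ["20150101000000", "http://example.com/"],
        ["20200101000000", "http://example.com/"],
    ])
    for original, ts in latest_captures(sample).items():
        print(local_path(original), "<-", raw_capture_url(ts, original))
```

Using the `id_` modifier for downloads matters here: it returns the page as captured rather than the rewritten, toolbar-wrapped version, which is what the "requires manual code cleaning" column is warning about.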

The SPA Limitation: Modern Single Page Applications (SPAs) built on frameworks like React or Vue are difficult to recover. Because they rely on the browser to build the page via JavaScript after it loads, older crawlers often saved only a blank white HTML shell.
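One way to flag captures affected by this problem is a rough heuristic: a page that loads scripts but contains almost no visible text is probably an unrendered SPA shell. The Python sketch below uses the standard library’s `html.parser`. The 200-character threshold and the function and class names are hypothetical tuning choices, not an established rule, so expect false positives on legitimately sparse pages.

```python
from html.parser import HTMLParser


class _TextCounter(HTMLParser):
    """Counts visible text characters and <script> tags in an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.text_chars = 0
        self.scripts = 0
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
            if tag == "script":
                self.scripts += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Ignore script/style bodies; they are not rendered text.
        if not self._skip_depth:
            self.text_chars += len(data.strip())


def looks_like_spa_shell(html: str, min_text_chars: int = 200) -> bool:
    """Heuristic: scripts present but too little visible text to be real content."""
    counter = _TextCounter()
    counter.feed(html)
    counter.close()
    return counter.scripts > 0 and counter.text_chars < min_text_chars


if __name__ == "__main__":
    shell = ('<html><head><title>App</title></head><body><div id="root"></div>'
             '<script src="/static/js/main.js"></script></body></html>')
    print(looks_like_spa_shell(shell))
```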

The Archive is fighting link rot automatically through major ecosystem partnerships:

The Wayback Machine has evolved from a library into a forensic witness. Because its crawlers are automated and neutral, U.S. and international courts increasingly accept Wayback Machine captures as legitimate evidence in patent, copyright, and criminal cases.

However, operating this massive database comes with modern challenges:

The Internet Archive’s Wayback Machine is a major achievement of both software engineering and cultural foresight. By continually harvesting the web, standardizing storage on the WARC ecosystem, and developing sophisticated proxy routing methods, it has built a digital history of the web itself. Its importance can hardly be overstated: beyond the nostalgia it offers, it provides an indispensable basis for legal verification, journalistic accountability, and the complete forensic restoration of lost digital resources.

Although the organization is under intense pressure from advanced cyber threats and from the collateral damage of the AI copyright wars, its continued innovation, shown by the Save Page Now (SPN2) API, native CMS integrations, and partnerships with major search engines, demonstrates its commitment to its mission. With more than one trillion web pages preserved, the Archive helps ensure that, however volatile the internet may be, the knowledge, communications, and culture of the modern world will remain intact for future generations.

Hassan Tahir wrote this article, drawing on his experience to clarify WordPress concepts and enhance developer understanding. Through his work, he aims to help both beginners and professionals refine their skills and tackle WordPress projects with greater confidence.