For years, SEO was treated as a battle of keywords, backlinks, content length, and technical optimization. Those elements still matter, but the modern search landscape has become far more complex.

The internet is no longer made solely of human users reading pages and clicking links. It is now saturated with crawlers, scrapers, automated scripts, AI agents, and headless browsers. For website owners, hosting providers, SaaS companies, and infrastructure teams, this fundamental shift changes the entire SEO conversation.

Search visibility is no longer just about publishing content. It is about proving trust, protecting user signals, keeping analytics clean, and defending your platform from synthetic traffic that distorts performance data.

The recent Google Search API document leak and the U.S. Department of Justice (DOJ) antitrust trial materials brought renewed attention to the most critical currency in modern SEO: user interaction data. Google cautioned that the leaked documents may lack context, but taken together with the trial materials they reinforced how heavily the search engine relies on user behavior, clicks, and interaction signals to measure quality.

This is exactly where systems like NavBoost enter the discussion.

NavBoost is not an SEO rumor; it is a documented reality. It appeared extensively in public materials from the DOJ case involving Google.

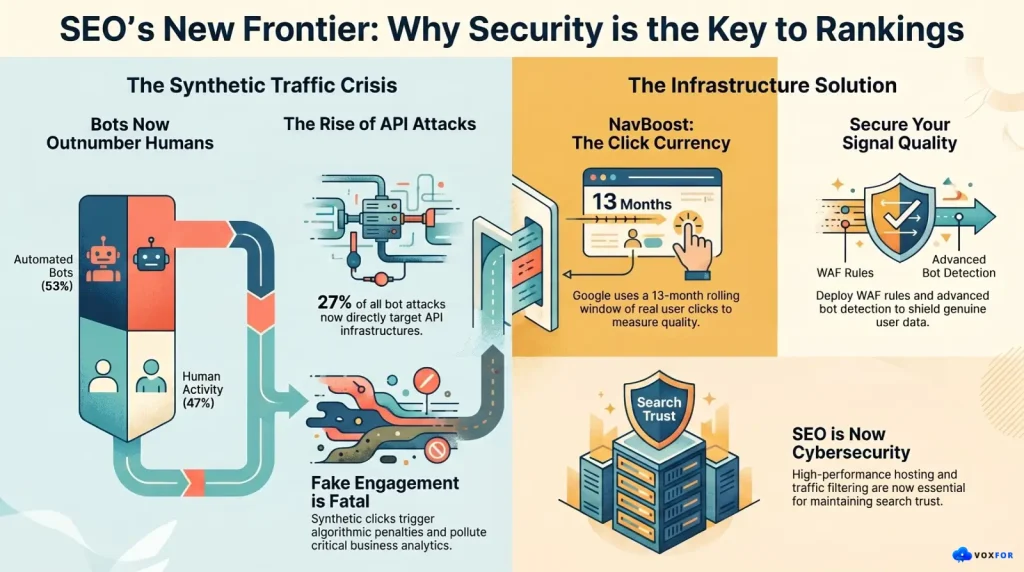

In those trial exhibits, NavBoost is described as a system that relies on user-side data, specifically clicks, to help Google improve search result quality. Internal documents revealed that Google learned from users “in the form of clicks,” and that NavBoost retains those click interactions over a rolling 13-month window.

Does this mean CTR (Click-Through Rate) alone controls rankings? No. Does it mean anyone outside Google knows the exact mathematical weight of a click? Absolutely not. Google’s algorithms evaluate hundreds of billions of pages using a multitude of complex systems.

The reasonable conclusion is this: user interaction data matters deeply, but it operates inside a highly sophisticated ecosystem built to resist manipulation.

This is a critical realization for serious businesses. SEO cannot be reduced to faking clicks or manufacturing shallow engagement. In fact, the more search engines rely on genuine user behavior, the more dangerous fake or low-quality traffic becomes for your infrastructure and your rankings.

The “old internet” was easy to measure. A visitor arrived, read a page, submitted a form, or left. Today, the majority of web traffic isn’t human at all.

According to the 2026 Imperva Bad Bot Report, automated traffic has officially broken new records, accounting for 53% of all web traffic globally. Human activity has fallen to a minority share of just 47%. The report also highlights that 27% of bot attacks are now targeting APIs directly, driven largely by the massive explosion of AI agents interacting with the web at machine speed.

Similarly, platforms like Cloudflare have had to radically update their bot management frameworks in 2026 simply to handle the sheer volume of headless browsers and LLM scrapers consuming HTML requests.

For business owners, this creates a severe operational blind spot: Not all traffic is equal.

A website can show spiking traffic charts while generating zero real leads. A page can appear highly engaging in Google Analytics, while the data is actually polluted by automation.

This is no longer just a marketing issue. It is an infrastructure issue.
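The gap between a rising traffic chart and flat lead volume becomes visible once sessions are split by segment. The sketch below is a minimal illustration under stated assumptions: the `lead_rate_by_segment` helper, the segment labels, and the sample numbers are all hypothetical and do not come from any analytics product.

```python
from collections import defaultdict


def lead_rate_by_segment(sessions):
    """Return the share of sessions that produced a lead, per segment.

    `sessions` is an iterable of (segment, converted) pairs, where
    `converted` is True when the session produced a real lead.
    """
    totals = defaultdict(lambda: [0, 0])  # segment -> [session count, lead count]
    for segment, converted in sessions:
        totals[segment][0] += 1
        totals[segment][1] += int(converted)
    return {seg: leads / count for seg, (count, leads) in totals.items()}


# Invented sample data: 4 human sessions (2 leads) and 16 automated sessions (0 leads).
sessions = [("human", True), ("human", False), ("human", False), ("human", True)]
sessions += [("automated", False)] * 16

rates = lead_rate_by_segment(sessions)
overall = sum(converted for _, converted in sessions) / len(sessions)

print(f"overall lead rate: {overall:.0%}")
for segment, rate in rates.items():
    print(f"{segment}: {rate:.0%}")
```

In this invented sample, the blended rate looks poor only because automated sessions inflate the denominator, which is why the unsegmented chart can mislead.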

Some webmasters look at systems like NavBoost and reach the wrong conclusion. They assume that if clicks matter, they should deploy bots to manipulate dwell time and engagement metrics.

This is a fatal mistake.

Google’s spam policies explicitly target techniques designed to deceive users or manipulate Search systems. Modern SEO is not about simulating user signals; it is about earning them and protecting them. Fake engagement creates catastrophic risks for a business:

If ranking systems learn from real users, your long-term strategy must focus on attracting genuine intent and shielding your site from synthetic noise.

This is the evolution most businesses have entirely missed. Historically, SEO and cybersecurity operated in silos. The SEO team worried about schema and page speed; the security team worried about firewalls and DDoS attacks.

That separation is gone.

If bots can pollute traffic data, trigger fake visits, scrape premium content, overload servers, and distort engagement patterns, then your SEO performance is directly tethered to your infrastructure security. A modern, resilient SEO strategy must now include:

A weak hosting environment might keep your website online, but it fails to protect you from the noisy, malicious traffic that confuses search engines and ruins analytics. A strong infrastructure architecture actively helps you understand exactly what is happening on your platform.

Google does not rank websites higher simply because they pay for expensive servers. However, infrastructure dictates the foundational signals that both real users and search engine crawlers experience.

A reliable, enterprise-grade hosting platform guarantees:

When a server is unstable or drowning in bot traffic, the business loses more than just its search ranking. It loses trust. For competitive sectors like SaaS, AI development, and eCommerce, infrastructure must act as an intelligent filter—separating genuine customers from automated noise.

The future of SEO is moving aggressively toward Trust.

Clicks matter. Links matter. But all of these signals are amplified exponentially when they originate from a verified, authoritative entity. You cannot build long-term visibility on cheap tricks. You need a deep platform foundation:

A page that simply repeats generic marketing fluff is weak. A page that dissects a real market issue, explains architectural risks, and empowers users to make better decisions is a fortress.

The correct response to NavBoost and the rise of AI bots is maturity, not panic. Stop obsessing over daily rank tracking and start auditing your signal quality.

Build Content That Solves Deep Problems: Do not publish generic AI filler. Create content that compares architectures, explains technical risks, and serves real user intent.

Protect Analytics from Synthetic Noise: Segment your traffic. Discard data from suspicious ASNs and unnatural user-agents.
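As a rough illustration of that segmentation step, the sketch below separates hits into likely-human and likely-synthetic buckets before they reach a report. Everything in it is an assumption made for the example: the `Hit` record, the ASN set (drawn from the range reserved for documentation), and the user-agent markers are placeholders, and a real deployment would build these lists from its own logs.

```python
from dataclasses import dataclass

# Placeholder values only. These ASNs come from the range reserved for
# documentation use; substitute networks you have actually observed misbehaving.
SUSPICIOUS_ASNS = {64496, 64511}
# Substrings that commonly appear in automated clients' user-agent strings.
BOT_UA_MARKERS = ("headlesschrome", "python-requests", "curl/", "scrapy")


@dataclass(frozen=True)
class Hit:
    path: str
    asn: int
    user_agent: str


def is_synthetic(hit: Hit) -> bool:
    """Flag a hit when its UA is empty, its ASN is listed, or its UA matches a marker."""
    ua = hit.user_agent.strip().lower()
    if not ua:
        return True
    if hit.asn in SUSPICIOUS_ASNS:
        return True
    return any(marker in ua for marker in BOT_UA_MARKERS)


def segment(hits):
    """Split hits into (human, synthetic) lists, preserving order."""
    human, synthetic = [], []
    for hit in hits:
        (synthetic if is_synthetic(hit) else human).append(hit)
    return human, synthetic


hits = [
    Hit("/pricing", 64500, "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 Chrome/124.0 Safari/537.36"),
    Hit("/pricing", 64496, "Mozilla/5.0 (X11; Linux x86_64) Chrome/124.0"),
    Hit("/blog", 64500, "python-requests/2.31.0"),
    Hit("/blog", 64500, ""),
]
human, synthetic = segment(hits)
print(len(human), len(synthetic))  # prints "1 3"
```

The sketch assumes each hit already carries an ASN; resolving an IP address to its ASN requires a separate lookup source and is left out. User-agent strings are trivially spoofed, so a filter like this is only a first pass and not a complete bot defense.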

Netanel Siboni is a technology leader specializing in AI, cloud, and virtualization. As the founder of Voxfor, he has guided hundreds of projects in hosting, SaaS, and e-commerce with proven results. Connect with Netanel Siboni on LinkedIn to learn more or collaborate on future projects.