
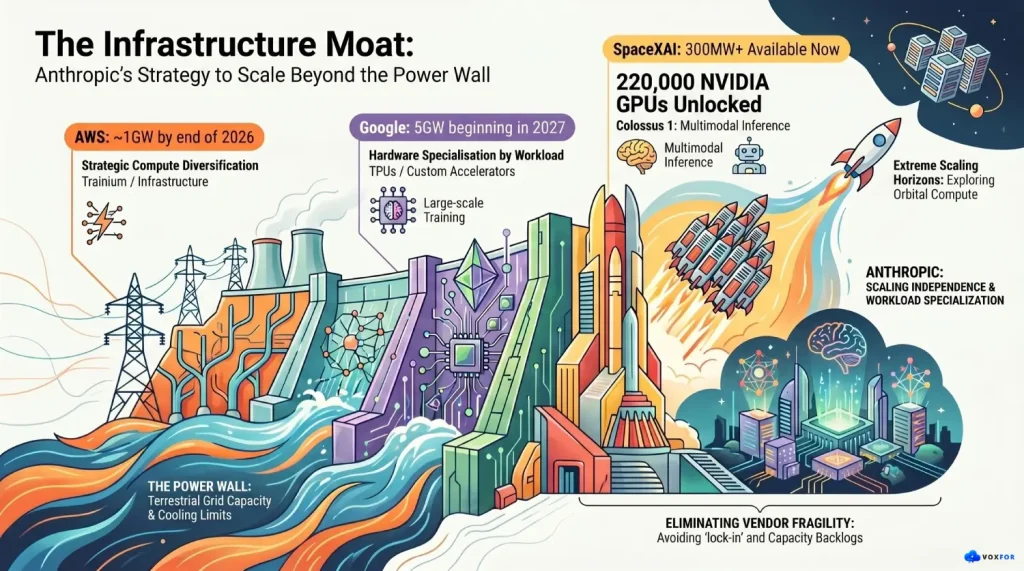

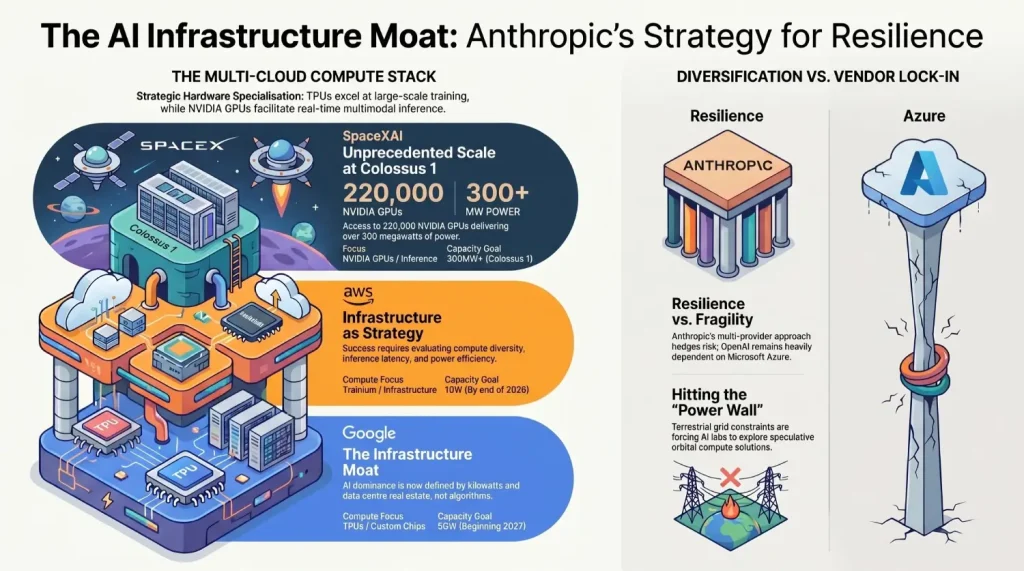

Anthropic’s recent deal with SpaceX for access to all compute capacity at SpaceX’s Colossus 1 data center, over 220,000 NVIDIA GPUs backed by more than 300 megawatts of power, marks a pivotal shift in AI infrastructure strategy. This GPU-heavy capacity complements Anthropic’s reliance on Google TPUs for training and enables multimodal inference at significant scale. Amid industry-wide compute shortages, the deal strengthens Claude’s position against OpenAI’s Azure-backed dominance and signals how constrained the cloud AI market has become.
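For a sense of scale, dividing the headline power figure by the GPU count gives a rough all-in power budget per GPU. This is a back-of-the-envelope sketch that assumes the 300 MW figure covers the whole site (cooling, networking, and host servers included) and takes both "300+ MW" and "over 220,000 GPUs" at their stated values; the actual per-accelerator draw is not given in the deal reports.

$$
\frac{300\ \text{MW}}{220{,}000\ \text{GPUs}} = \frac{300 \times 10^{6}\ \text{W}}{2.2 \times 10^{5}} \approx 1{,}364\ \text{W} \approx 1.4\ \text{kW per GPU}
$$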

The deal’s full context appears tied to Elon Musk’s broader infrastructure consolidation across SpaceX, xAI, and Colossus. Reports indicate that xAI is being folded into SpaceXAI. If this structure holds, selling Colossus capacity through enterprise cloud contracts could help SpaceX establish itself as an AI infrastructure provider beyond rockets, satellites, and Starlink. Anthropic becomes the flagship customer, lending the venture immediate credibility while securing capacity at scale for itself.

AI firms face a fundamental “power wall”: training and inference demand gigawatts, but terrestrial grids and available data center capacity lag behind. Anthropic, like its rivals, scales through multiple providers: AWS for custom accelerators, Google/Broadcom for TPUs, and now SpaceXAI for NVIDIA GPUs. OpenAI, by contrast, has committed billions to Microsoft Azure and accounts for roughly 45% of Azure’s backlog, alongside $250B in broader infrastructure pledges.

The reality is stark: compute capacity has become the true constraint in AI development, not algorithmic innovation. Companies that secure diverse, resilient infrastructure will shape the competitive landscape.

| Provider | Key Partner | Compute Focus | Capacity Notes |
|---|---|---|---|
| AWS | Anthropic | Trainium / AI infrastructure | Nearly 1 GW by end of 2026 |
| Google/Broadcom | Anthropic | TPUs / custom AI accelerators | 5 GW beginning in 2027 |
| SpaceXAI | Anthropic | NVIDIA GPUs | 300 MW+, 220K GPUs at Colossus 1 |
| Microsoft Azure | OpenAI | GPU-heavy AI cloud capacity | ~45% of Azure backlog tied to OpenAI |

TPUs remain powerful for large-scale ML training, while NVIDIA GPUs offer broader flexibility and mature software support for multimodal inference, generative video, fine-tuning, and real-time AI workloads. This isn’t a weakness of TPUs; it’s a specialization trade-off. Google’s chips are optimized for the tensor math that dominates training, while NVIDIA’s GPUs balance training, inference, and rapid iteration. By spreading its stack across AWS, Google, and SpaceXAI, Anthropic hedges against infrastructure risk instead of depending on any single provider.

Anthropic’s strategy shows sophisticated hedging: nearly 1 GW from AWS by the end of 2026, 5 GW from Google beginning in 2027, and GPU capacity from SpaceXAI together form a resilient, diversified compute base. This contrasts with OpenAI’s heavy reliance on Azure, which carries vendor dependency risk because Microsoft must balance competing demands: Copilot integration, internal R&D, and other customers’ AI workloads.
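To put the diversification claim in numbers, the sketch below tallies the capacity figures from the table above and computes each provider’s share of the total. It is illustrative only: it assumes the headline numbers (about 1 GW from AWS, 5 GW from Google/Broadcom, 0.3 GW from SpaceXAI) can be compared directly, even though they come online on different timelines, and `shares` is a hypothetical helper name, not part of any vendor tool.

```python
# Illustrative tally of Anthropic's stated compute capacity by provider.
# Assumption: headline figures from the table are treated as comparable,
# even though they come online at different times (end of 2026 vs. 2027+).

CAPACITY_GW = {
    "AWS (Trainium)": 1.0,          # "nearly 1 GW by end of 2026"
    "Google/Broadcom (TPU)": 5.0,   # "5 GW beginning in 2027"
    "SpaceXAI (NVIDIA GPU)": 0.3,   # "300 MW+" at Colossus 1 (lower bound)
}


def shares(capacity: dict[str, float]) -> dict[str, float]:
    """Return each provider's fraction of total capacity (hypothetical helper)."""
    total = sum(capacity.values())
    return {name: gw / total for name, gw in capacity.items()}


if __name__ == "__main__":
    total = sum(CAPACITY_GW.values())
    print(f"Total: {total:.1f} GW")
    for name, frac in shares(CAPACITY_GW).items():
        # Percentage format multiplies by 100 and appends "%".
        print(f"{name:<24} {frac:6.1%}")
```

With these inputs, Google/Broadcom accounts for roughly 79% of the combined figure, AWS about 16%, and SpaceXAI about 5%, so on these numbers the diversification shows up mainly in the number of suppliers and chip architectures rather than in an even split of raw capacity.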

The winners in this infrastructure race will be providers that blend custom chips, cloud flexibility, and strategic partnerships. AWS and Oracle, which avoid single-vendor commitments, are well positioned.

This escalation shows how “platform thinking”, treating infrastructure as a strategic advantage, extends far beyond SEO and cybersecurity. AI success depends on infrastructure resilience, not on algorithms alone, so enterprises choosing AI platforms must evaluate a provider’s underlying compute strategy as well as its models.

Anthropic has expressed interest in developing gigawatts of orbital AI compute capacity, a sign of how severe the industry’s power, land, and cooling constraints have become. Orbital data centers remain speculative, but the fact that leading AI labs are exploring them underscores real bottlenecks: terrestrial real estate, grid capacity, and water for cooling are already limiting factors in 2026.

Anthropic’s moves reinforce a structural shift: GPU/TPU compute infrastructure has become AI’s ultimate competitive moat. The era in which novel algorithms alone drove advantage is ending. What matters now is reliable, diversified access to raw computational power: hyperscaler resources from AWS, Google, and emerging players like SpaceXAI, combined with hardware vendor partnerships and long-term power agreements. The race for AI dominance is, fundamentally, a race for kilowatts, GPUs, TPUs, and data center real estate.

SpaceX’s willingness to monetize Colossus through Anthropic, positioning itself as an independent cloud vendor, signals that the 2026 AI infrastructure landscape is no longer dominated by a handful of tech giants. New players with capital, power access, and engineering expertise are entering the market. The winners won’t be determined by model architecture alone, but by infrastructure strategy, access to the power grid, and the ability to diversify across multiple compute providers and chip architectures.

Netanel Siboni is a technology leader specializing in AI, cloud, and virtualization. As the founder of Voxfor, he has guided hundreds of projects in hosting, SaaS, and e-commerce with proven results. Connect with Netanel Siboni on LinkedIn to learn more or collaborate on future projects.